Rock Falcon, the poster boy for Softimage’s Face Robot technology, takes digital acting to new heights with expressive facial animation.

The problem is a classic one for animators, many of whom have fallen back on the argument that there is no place for hyper-realistic human animation. When it comes to animating humans, the argument goes, it’s better to opt for more stylized faces so the viewer doesn’t get distracted. And certainly there is a whole beautiful body of work that supports this point, including Disney’s Snow White, Hayao Miyazaki’s Spirited Away, and Pixar’s melding of classic squash-and-stretch with 3D in The Incredibles. But then an animated character like Gollum comes along, a combination of talented acting by Andy Serkis and stellar animation by at least 18 animators working for Weta. The bar is moved.

At Blur Studios, in Venice Beach, CA, the quest for good facial animation is close to an obsession. However, Blur is not the kind of studio that puts an army of technicians to work on specialized software. Rather, Blur prides itself on turning out high-quality 3D animation on time and on budget. Its body of work includes mischievous animated critters such as those in the Academy Award nominee Gopher Broke and plenty of human character animation for cinematics in games such as X-Men Legends II: Rise of Apocalypse.

Softimage’s Face Robot technology is being used for many projects at Blur. Most recently, it was used to complete a series of game cinematics for X-Men Legends II.

On a recent visit with Blur Studios, we talked with Blur’s President and Creative Director Tim Miller and Jeff Wilson, animation supervisor. Like so many people in the animation business, the people at Blur are friendly and funny when they’re not being driven by murderous deadlines. On this particular day, the people at Blur were taking an earthquake training course, though Tim mused that it seemed unnecessary to take a course in “running and screaming.”

When it comes to facial animation, Miller is opinionated and outspoken. He levels plenty of criticism at the tools that have been available from Alias, Autodesk, Softimage, and LightWave, saying the Blur team worked arduously on facial expressions, only to end up with animation they considered unworthy of their efforts. Wilson was also frustrated, especially when Miller pointed out places where the facial animation wasn’t working, particularly around the jaw and the mouth. “You can hack the eyebrows,” notes Wilson, “but motion capture wasn’t helping with the mouth.” Up to this point, Blur’s animators were trying to make facial animation work through brute force. Wilson notes that at one point they were using as many as 100 markers to capture facial movement, and still the results were not what they wanted. When they tried morphs, too many steps were required to accomplish the movements of cheeks bulging, eyes opening, and eyebrows wrinkling. Blur was discovering that you can’t model and mocap every single move. Brute force is not practical.

The solution, they were certain, was a matter of getting better software. “We wanted the software to do more of the work,” explained Wilson.

Blur has worked primarily with Autodesk Media and Entertainment’s 3ds max, and Miller has been a believer in a single pipeline for as much of the production as possible. So Wilson took time off to focus on facial animation, first working with Autodesk Media and Entertainment’s Character Studio. The Autodesk team pitched in to help, but the Blur team wasn’t getting the results it wanted, and the complexity of what they wanted to achieve slowed down the software. Somewhere around this time, the guys from Blur ran into the guys from Softimage’s Special Projects Team. (Venice, California is, after all, a small town, especially if you’re working in computer animation.) And the Softimage Special Projects Group was formed.

Just around the corner from Venice’s famous Muscle Beach, in offices that, ironically, were formerly occupied by Arnold Schwarzenegger, the Softimage Special Projects Group tackles customer problems such as creating realistic facial animation. Yes, the heavy lifting for facial animation is now being carried out in Arnold’s former workout room.

In those offices, Michael Isner leads the team working on facial animation, which includes Thomas Kang, Dilip Singh, and Javier von der Pahlen. Isner and von der Pahlen both have backgrounds in architecture and Kang has worked in interface design. Singh is a facial production expert, and he and von der Pahlen both have production experience and a good idea of what customers are going through. They have a larger backup group at Softimage HQ in Montreal.

By the time the Softimage Special Projects Team met Blur, Michael Isner said his team was ready to tackle solving “tens of hundreds of extremely hard problems.”

At first there was some concern about turning to software from Softimage since Blur’s pipeline has been built around 3ds max. Tim Miller laughs in retrospect. “These guys were scared to come to me with something from another company.” Miller, a veteran of the complicated problems faced by big studios with spaghetti pipelines, compounded by proprietary tools, vowed that Blur would avoid that situation by sticking to a simplified pipeline, using software tools from one company. But, once he was convinced that the Softimage team could help with the problem of facial animation, he was willing to give it a try. Says Miller: “Hey, I said, we tried. We put in a good faith effort, have at it.”

The relationship between Softimage’s Special Projects Group and Blur is described by both as a super-accelerated beta program and, in fact, Blur’s input helped the Softimage group take its Face Robot facial animation technology to the final stages of product development. Like Wilson, the Softimage team believed that the secret to facial animation was in building a system that understands how faces work, so that the animator could work creatively with less focus on the mechanics of the process. But Isner and Kang both stress that the input from Blur’s animators gave them a critical understanding of how facial animation software needs to work to be truly useful to animators.

Thomas Kang with skull, Gregor vom Scheidt, Michael Isner, Javier von der Pahlen, and Dilip Singh of Softimage in the Special Projects studio near Muscle Beach.

“We want to be in the acting business,” said Isner, who believes Face Robot can open the door to a new community of graphics artists: people who will make facial animation a specialty. Just as there are Inferno artists, people skilled in using Autodesk’s Inferno effects software, there will be Face Robot artists.

Softimage|Face Robot differs from traditional modeling and animation tools, which have evolved as mechanical assemblies that move via software levers and pulleys or along paths. Instead, Face Robot enables soft tissue animation. When an animator grabs a control point and pulls, the face follows the point in a natural way, and the whole face is involved. Grab one side of the mouth and pull up, and you get a sneer. Create a smile and the cheeks bulge and the eyes crinkle. Kang compares Face Robot to a Google app. “It’s simple on the outside, but there is a lot going on under the hood.”
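
To make the contrast with levers and pulleys concrete, here is a minimal Python sketch of one generic way a pulled control point can drag the surrounding surface with it: a distance-based Gaussian falloff. It is an illustrative stand-in only, not Face Robot’s solver, whose internals are not described in this article. The function name `pull_handle`, the `radius` parameter, and the sample coordinates are all hypothetical.

```python
import math

def pull_handle(vertices, handle, offset, radius=1.0):
    """Move each vertex by `offset`, scaled by a Gaussian falloff of its
    distance to `handle`. Hypothetical illustration only; this is NOT
    Face Robot's actual solver."""
    moved = []
    for v in vertices:
        dist = math.dist(v, handle)
        # Weight is 1.0 at the handle and fades toward 0 with distance.
        weight = math.exp(-(dist / radius) ** 2)
        moved.append(tuple(c + weight * o for c, o in zip(v, offset)))
    return moved

# Made-up sample points: mouth corner, mid-cheek, forehead.
face = [(1.0, 0.0, 0.0), (1.5, 0.5, 0.0), (0.0, 4.0, 0.0)]

# Pull the mouth corner upward by half a unit.
result = pull_handle(face, handle=(1.0, 0.0, 0.0), offset=(0.0, 0.5, 0.0))
```

In this toy version the mouth corner moves the full distance, the cheek follows part of the way, and the forehead barely moves. A production system would presumably need far more than a single falloff to make cheeks bulge and eyes crinkle.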

A common problem in motion capture is the requirement for up to a hundred control points, which can cause lengthy setup times. Face Robot reduces the complexity and time of this process by using only 32 points. The result is a quicker process and better control over editing afterwards. A head created with any 3D modeling tool, for example, can be brought into Face Robot, where the 32 key facial landmarks are selected on the model. Face Robot includes an interface that prompts users to select points such as the corner of the mouth, the corner of the eye, and the center of the eyebrow. Motion capture or keyframe animation can then be used to drive a new set of handles that are optimized to pull the face around like a piece of rubber. Because Face Robot is bundled with the entire programming API, modeling, and character setup environment of Softimage XSI, studios have a great deal of flexibility to fine-tune the facial system for their own needs. By incorporating additional rigging over the Face Robot solver, studios can make the system resemble their own internal facial animation processes and interfaces. Face Robot is a superset of Softimage XSI and includes a complete environment for facial animation, with tweaks to the XSI core.

At Siggraph 2005, Face Robot was demonstrated by Rock Falcon, a tough-guy character created by Blur Studios. His great dramatic moment comes when he rolls a watermelon seed around in his mouth, positions it, spits it out, and turns to the camera with a satisfied smile. Blur’s Jeff Wilson, wearing mocap markers, supplied the motion for Rock’s big moment. He notes that the little smile at the end was probably involuntary, but it’s a big part of what he likes about Face Robot. “The face stays live.” Wilson notes that one drawback of painstakingly animated faces is that they can go dead and flat between movements. With its underlying network of interconnected vertices, Face Robot does not go dead. In an interview with Softimage’s site XSI-base, Wilson notes, “The human face is always moving unless it is very relaxed. Even when ‘hitting’ an expression in real life, the muscles will settle or twitch a bit. There are lots of very subtle adjustments that can happen after you reach an expression, and that’s what makes the performance interesting.”

So while Face Robot has all the capabilities of XSI, it also has its own unique engine for faces. Underneath it all, there is math. Michael Isner says the engine for Face Robot is essentially a solver with a collection of algorithms tackling the problems of facial movement. “We parameterized the problem.” To meet those parameters, however, Face Robot requires a certain level of modeling. In most instances, says Isner, users will bring a head into Face Robot and may have to fine-tune it to meet the expectations of the system’s engine. Face Robot has tools for modeling and sculpting the head beyond what is originally brought into the system. Being realistic, Isner says Face Robot could at first make the lives of animators more difficult, as they get over the initial hurdle of having to create a higher level of facial detail. It will require expertise, but the work put into preparing the head for animation will be rewarded with more flexibility and power when it comes to actually creating animation.

Clearly the effort has paid off for Blur. Jeff Wilson notes that the company’s productivity has skyrocketed. Wilson says that animators at Blur have been able to turn around about one second of animation for every hour of animator time, including setup. It’s a new equation for the company.

The company believes that the ability to animate faces better will also drive new business. In the past, a lot of work went into downplaying the face in animation by focusing instead on the motion of the body to convey most of the performance. Now animators can use the face and take advantage of the emotional impact that faces can provide.

Likewise, it doesn’t take much to get Isner talking excitedly about the potential for facial animation and for Softimage. The ability to create Face Robot comes to a large degree from the painful rewriting process that took Softimage to the next level with XSI. One of the new features of XSI 5 is Gator, the ability to apply animation from one model to another. This can be especially powerful in the case of facial animation, and especially facial animation created with Face Robot. Performances can be saved and because of the consistent use of 32 markers, they can be easily transferred between faces. It’s even possible that an actor’s head can be scanned, captured, mo-capped, and re-used for additional footage.
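
The reason a consistent marker set makes transfer easy can be sketched in a few lines of Python. The sketch below assumes each performance frame stores a position per named marker and simply re-applies each marker’s displacement from its neutral position onto the second face. This is an assumption for illustration, not a description of how Face Robot or Gator transfers animation. The function `transfer_performance`, the `scale` parameter, the marker names, and the coordinates are hypothetical, and only two markers are shown rather than 32.

```python
def transfer_performance(frames, source_rest, target_rest, scale=1.0):
    """Re-apply per-marker displacements recorded on one face to another.

    frames:      list of {marker_name: (x, y, z)} poses on the source face
    source_rest: {marker_name: (x, y, z)} neutral pose of the source face
    target_rest: {marker_name: (x, y, z)} neutral pose of the target face
    scale:       hypothetical factor to account for a larger or smaller face
    """
    out = []
    for frame in frames:
        posed = {}
        for name, rest in target_rest.items():
            # Displacement of this marker from the source's neutral pose.
            delta = [p - r for p, r in zip(frame[name], source_rest[name])]
            posed[name] = tuple(r + scale * d for r, d in zip(rest, delta))
        out.append(posed)
    return out

# Made-up neutral poses for two faces sharing the same marker names.
source_rest = {"mouth_corner_L": (1.0, 0.0, 0.0), "brow_center_L": (1.0, 3.0, 0.0)}
target_rest = {"mouth_corner_L": (2.0, 0.0, 0.0), "brow_center_L": (2.0, 5.0, 0.0)}

# One frame in which the source's mouth corner lifts by half a unit.
smile = [{"mouth_corner_L": (1.0, 0.5, 0.0), "brow_center_L": (1.0, 3.0, 0.0)}]

retargeted = transfer_performance(smile, source_rest, target_rest, scale=2.0)
```

Because both faces share the same marker names, no per-character mapping step is needed; with differing marker layouts, that mapping would have to be built by hand for every pair of faces.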

Jeff Wilson notes that working Face Robot into Blur’s workflow has been reasonably simple. The studio can easily bring in a head modeled in 3ds max. At that point, though, Blur tries to avoid doing additional modeling in XSI. If a head needs more work, they’ll usually go back out to 3ds max to do the additional modeling.

Face Robot supports a variety of output formats, allowing users to work with different modeling and animation products for their entire project. Of course, notes Isner, the process is naturally easier when the pipeline is based on XSI.

Just as Blur Studios is enjoying an edge over the competition by using Face Robot before the product is officially rolled out, Softimage believes it has an edge of its own with Face Robot. Softimage Vice President Gregor vom Scheidt, former CEO of Alienbrain who came to Softimage with Alienbrain’s acquisition, will be leading the introduction of the new product. Face Robot isn’t going to be thrown to the dogs as a low-cost module. Rather, vom Scheidt says Face Robot is a high-end tool and will carry a premium price. The price tag will be closer to the Softimage of old than the XSI of today. It’s not a product for everyone and Softimage is not going for volume. Instead, Face Robot will be offered to key customers first. “We want users to have a good experience and we need to be sure we can support them.”

Blur used Face Robot in the animation pipeline when creating the Brothers in Arms television commercials for its client Activision.

Obviously, Face Robot is enjoying a honeymoon. As of this writing only a few people have access to the software, and most of them work for Blur Studios or Softimage. Blur helped develop it and they do see room for improvement, “especially around the mouth,” says Tim Miller, who apparently says this a lot because everyone standing around him will sigh, roll their eyes just slightly, and give a resigned nod. It seems clear, though, that Face Robot really will change the face of animation. It could have the effect of democratizing facial animation in the same way new price points have expanded the universe of 3D animators.

|

Every st

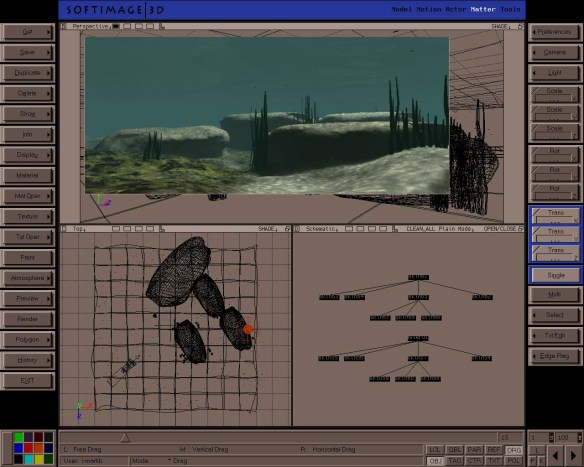

Every st The Render Tree, the Animation Mixer and the vastly im

The Render Tree, the Animation Mixer and the vastly im “With the Bubbaloo cat character that we’ve been focusing on most recently, we had some animation on which another of our animators had worked. With an earlier setup version, it would have been far more difficult to bring the animation across to another system but, thanks to the toolset in SOFTIMAGE|XSI, it was a breeze to transfer and reuse. More than that, one of the scenes of the Bubbaloo cat included some motion capture work. When the clients saw it initially, they didn’t care for some of the larger motions we’d used, so we used the Animation Mixer to maintain the basic motion capture l

“With the Bubbaloo cat character that we’ve been focusing on most recently, we had some animation on which another of our animators had worked. With an earlier setup version, it would have been far more difficult to bring the animation across to another system but, thanks to the toolset in SOFTIMAGE|XSI, it was a breeze to transfer and reuse. More than that, one of the scenes of the Bubbaloo cat included some motion capture work. When the clients saw it initially, they didn’t care for some of the larger motions we’d used, so we used the Animation Mixer to maintain the basic motion capture l